Day 78 of #100daysofnetworks

Verdant Eye: Five Minute Local OSINT

This is so cool to write. I’m going to keep this short.

In October, I created the beginnings of what would become the Verdant Eye because I wanted to have high-level situational awareness into my local area. I wanted to build something that would be useful to myself, my friends, and my community. So, initially, I built this for Portland, Oregon.

Anyway, it was too easy to build this out for Portland, so I opened its vision to the entire USA, and now we are beginning to expand into Europe. I can expand into any region. I have already crawled practically the entire internet several times over in previous years. I am not new to this. I wrote a lot about crawling in 2019.

I was bored about half an hour ago, so I thought I’d do a little experiment and see what I can see in my local area. I’m tired. I don’t want to do a big ol’ Data Science experiment right now. I just want to look at some stuff and then go play a video game.

I’ll save you some time: I did local OSINT in five minutes.

FIVE MINUTES, because my platform makes it that easy to get what I wanted to get. I’m learning more about my system as I use it, but I wasn’t expecting it to be that easy.

I’ll just use this as a quick walkthrough and freestyle this article.

Code Walkthrough

Just like last time (and every time, forever), I ran minimal code to get what I wanted.

question = 'what is happening in Oregon in March 2026?'

answer = ask_api(client, question)

Looking at my one preview output, I see this:

{'claim': 'Dante Moore is lobbying the Oregon governor to support mental health and reveals his own struggles.',

'url': 'https://nypost.com/2026/03/17/sports/dante-moore-lobbies-oregon-gov-to-support-mental-health-reveals-own-struggles/',

'domain': 'nypost.com',

'url_domain': 'nypost.com',

'date_added': '2026-03-17 04:12:56'}

That’s just sample data showing me that I got stuff back.
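If you want to poke at a record like that one, here is a minimal sketch using only the standard library. It assumes each result is a plain dict with the fields shown in the preview; `summarize` is a made-up helper name for illustration, not part of the API.

```python
from datetime import datetime
from urllib.parse import urlparse

# One record, copied from the preview output above.
record = {
    'claim': 'Dante Moore is lobbying the Oregon governor to support mental health and reveals his own struggles.',
    'url': 'https://nypost.com/2026/03/17/sports/dante-moore-lobbies-oregon-gov-to-support-mental-health-reveals-own-struggles/',
    'domain': 'nypost.com',
    'url_domain': 'nypost.com',
    'date_added': '2026-03-17 04:12:56',
}

def summarize(rec):
    """Hypothetical helper: return (date, host, claim) for one record."""
    # The timestamp format is inferred from the single preview record.
    added = datetime.strptime(rec['date_added'], '%Y-%m-%d %H:%M:%S')
    # Re-derive the host from the URL instead of trusting the 'domain' field.
    host = urlparse(rec['url']).netloc
    return added.date().isoformat(), host, rec['claim']

day, host, claim = summarize(record)
print(day, host, claim[:40])
```

Re-deriving the host from the URL is a cheap sanity check that the `domain` field matches where the claim actually came from.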

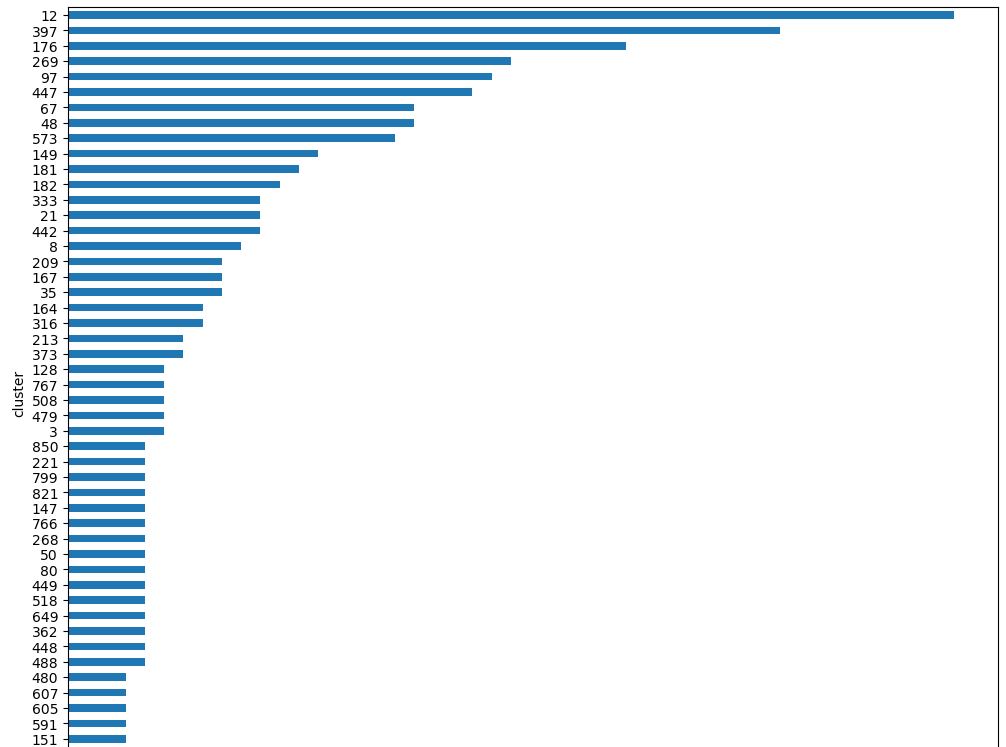

Blah blah blah, I do clustering, same as last time. But this time, I set my similarity_threshold very low, because I want to capture similar KINDS of things, not just similar examples of the same thing.

df['cluster'] = cluster_text(df['claim'], similarity_threshold=0.3) # reducing to capture broader topics

I can explore the cluster breakdown.
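To make the threshold idea concrete, here is a toy sketch. This is not how `cluster_text` works internally (that is not shown in this post); `toy_cluster` is a hypothetical stand-in that uses word-overlap (Jaccard) similarity and a greedy single pass, and the three claims are made up. It only shows why a lower threshold merges similar KINDS of things.

```python
def tokens(text):
    """Lowercased set of whitespace-separated words."""
    return set(text.lower().split())

def jaccard(a, b):
    """Word-overlap similarity between two token sets, from 0.0 to 1.0."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def toy_cluster(texts, similarity_threshold):
    """Greedy clustering: join the first cluster whose seed text is
    similar enough, otherwise start a new cluster."""
    seeds = []   # token set of the first text seen in each cluster
    labels = []
    for text in texts:
        toks = tokens(text)
        for cluster_id, seed in enumerate(seeds):
            if jaccard(toks, seed) >= similarity_threshold:
                labels.append(cluster_id)
                break
        else:
            # No existing cluster was close enough: this text seeds a new one.
            seeds.append(toks)
            labels.append(len(seeds) - 1)
    return labels

# Made-up claims, for illustration only.
claims = [
    'jazz festival in portland this weekend',
    'bloody mary festival in portland this weekend',
    'library hosts free coding class',
]

strict = toy_cluster(claims, similarity_threshold=0.8)
loose = toy_cluster(claims, similarity_threshold=0.3)
print(strict)  # [0, 1, 2]: every claim is its own cluster
print(loose)   # [0, 0, 1]: the two festival claims merge
```

The two festival claims share five of eight distinct words (similarity 0.625), so they stay apart at 0.8 and merge at 0.3. That is the same trade-off as above: a low threshold groups by topic, a high one groups near-duplicates.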

And I can loop through the clusters, reading them from large to small. Easy.
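The large-to-small loop can be sketched like this with just the standard library. The `rows` list is a made-up stand-in for the `cluster` and `claim` columns of `df`, and `clusters_by_size` is a hypothetical helper, not the code I actually ran.

```python
from collections import defaultdict

# Made-up (cluster_id, claim) pairs standing in for df[['cluster', 'claim']].
rows = [
    (0, 'festival claim A'),
    (1, 'library claim A'),
    (1, 'library claim B'),
    (1, 'library claim C'),
    (0, 'festival claim B'),
    (2, 'crime claim A'),
]

def clusters_by_size(rows):
    """Group claims by cluster id and return (id, claims) pairs, largest first."""
    groups = defaultdict(list)
    for cluster_id, claim in rows:
        groups[cluster_id].append(claim)
    # Sort by descending size; ties are broken by ascending cluster id.
    return sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))

ordered = clusters_by_size(rows)
for cluster_id, claims in ordered:
    print(f'cluster {cluster_id} ({len(claims)} claims)')
    for claim in claims:
        print('  -', claim)
```

If `df` is a pandas DataFrame, `df['cluster'].value_counts()` should give the same largest-first ordering of cluster ids.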

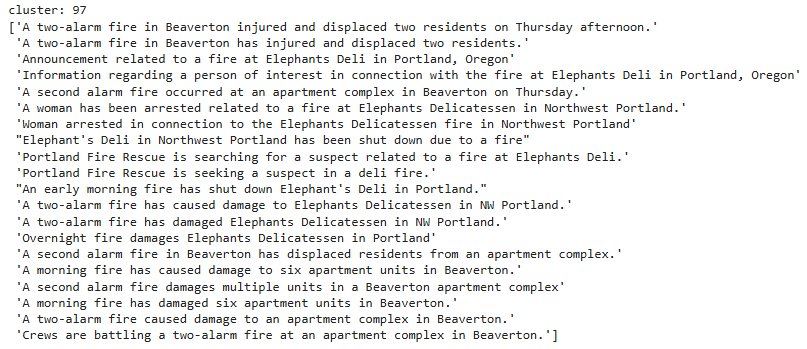

Real stuff gets picked up in these clusters.

And there is scarier stuff than this that I don’t want to show on my blog, but you can see it in the code outputs. Most news is not good news.

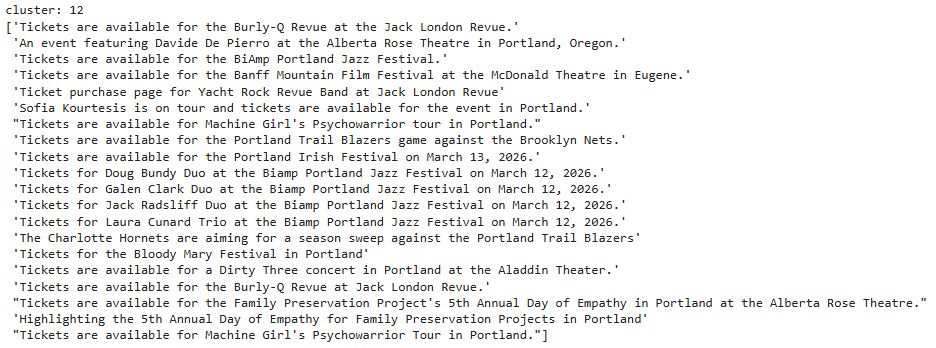

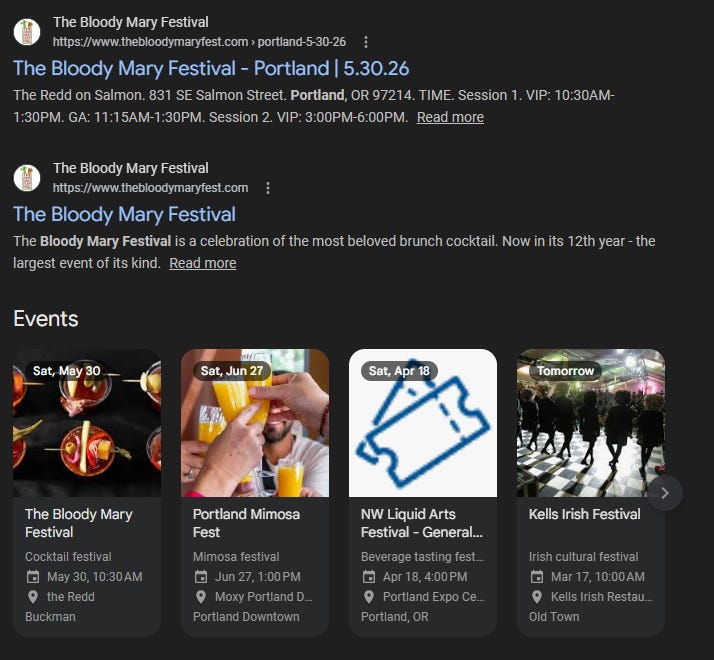

You can see some of the data arriving before the downstream GrooveSeeker automation picks it up. Neat. I want to go to a Jazz Festival. That sounds fun. Ooooh Bloody Mary Festival?! Are you kidding me?!

Daaaaaaaang. I had no idea. This happens with every OSINT investigation, but in OSINT you are often looking at the dark stuff. Verdant Intelligence is having the same “OMG!” impact on me naturally, and I am showing it to you as it is happening. I didn’t mean to be interrupted by this. Huh. Bloody Mary Festival. Hahaha. Outstanding. Haha.

What else do I see? Hmm.

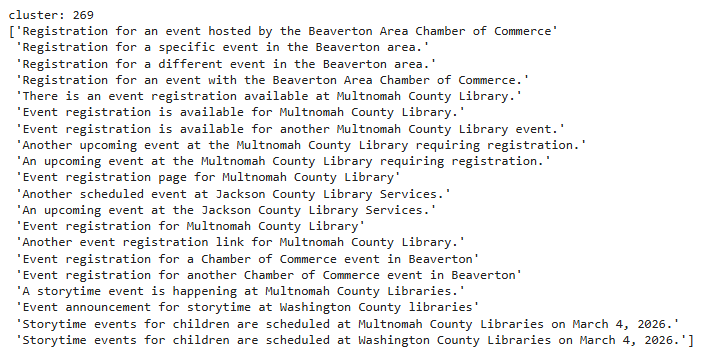

Neat. Bunch of stuff happening at the library. Chamber of Commerce! I need to go to their events! Cool!

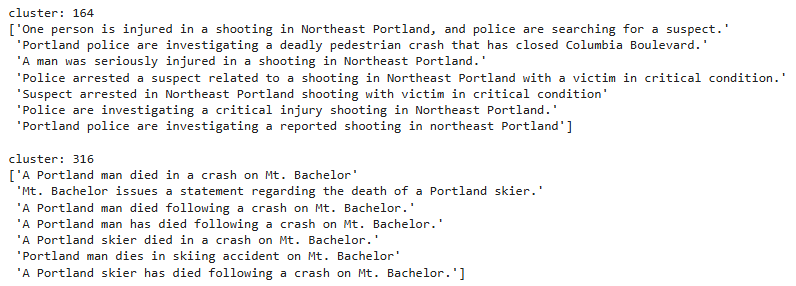

How about crime? What crimes have happened nearby?

I see that there is mention of a shooting in Northeast Portland, and other sad news.

And there is more. In the code, I only showed a few of the clusters, because I don’t want to just give everything away. This API is not free.

So, that’s it. It took five minutes to set up end-to-end OSINT for my local area, and I can do this anytime I want.

Cool. I like this. This is a useful tool, and I’m barely showing what this can do.

That’s it. Please subscribe. This is a combination of real-world AI and Data Science. You can do really creative and powerful stuff with this combination.

If you would like to buy access to this API, please email me at info@verdantintel.com

Are you facing the same issue that I am, where you have more data than you can easily derive value from? I provide data, but no one looks at it. So then I decide to filter it down, but then I'm looking at manipulated data. Do you have something that will take all of your OSINT and perform an agnostic analysis, maybe surfacing unapparent trends, linkages between disparate data, that kind of thing?