Day 63 of #100daysofnetworks

Happy New Year! GraphRAG with Style Transformation!

Hello everyone!

What an exciting week. On Christmas evening, I wrote about the creation of a Minimal GraphRAG for our dataset of 30 years of Artificial Life Research references.

That worked out really well.

Today, I am extending our GraphRAG, giving it a bit of style and personality.

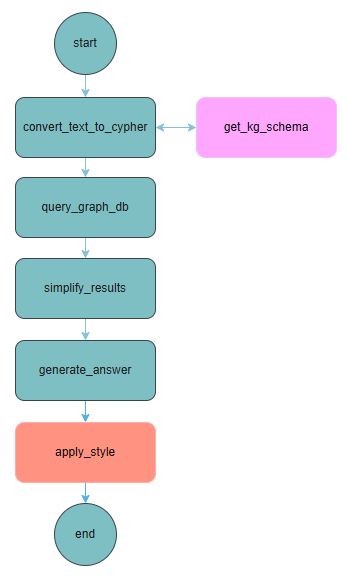

The ‘apply_style’ step was not in our previous approach. I began working on it immediately after finishing that article, and after a few hours of research and development, I am now happy with the results. I’ll show them in this article!

The steps do the following:

Convert a text input into a Cypher query. This is a crucial step, ensuring you pull relevant information that helps answer the input prompt or question.

The Cypher query is used to fetch data from the database.

Then the data is quickly simplified, which improves every subsequent step.

Then the simplified data is used to generate an answer.

And finally, the answer undergoes a style transformation to be more easily read.
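The five steps above can be sketched as one small function. This is a minimal sketch, not the actual implementation: the LLM calls and the database call are passed in as plain callables, and every name here (`run_pipeline`, `simplify`, `to_cypher`, `run_query`, `generate_answer`, `apply_style`, and the field names) is a hypothetical stand-in.

```python
from typing import Callable, Dict, List

Rows = List[Dict[str, object]]

# Hypothetical field names; the real database schema may differ.
KEEP_FIELDS = ("title", "authors", "year", "url", "summary")


def simplify(rows: Rows, keep=KEEP_FIELDS) -> Rows:
    """Keep only the fields later steps need and drop empty values."""
    return [
        {key: row[key] for key in keep if row.get(key) not in (None, "")}
        for row in rows
    ]


def run_pipeline(
    question: str,
    to_cypher: Callable[[str], str],
    run_query: Callable[[str], Rows],
    generate_answer: Callable[[str, Rows], str],
    apply_style: Callable[[str], str],
) -> str:
    """Run the five steps in order and return the styled answer."""
    cypher = to_cypher(question)             # 1. text -> Cypher
    rows = run_query(cypher)                 # 2. fetch data
    data = simplify(rows)                    # 3. simplify
    answer = generate_answer(question, data) # 4. generate answer
    return apply_style(answer)               # 5. style transformation


if __name__ == "__main__":
    # Stub callables stand in for the LLM and the graph database.
    fake_rows = [{"title": "Jupiter - friend or foe?", "year": 2008, "score": 0.9}]
    print(
        run_pipeline(
            "what papers are about jupiter?",
            to_cypher=lambda q: "MATCH (p:Paper) RETURN p LIMIT 5",
            run_query=lambda cypher: fake_rows,
            generate_answer=lambda q, data: f"Found {len(data)} paper(s).",
            apply_style=lambda answer: answer + " Enjoy!",
        )
    )
```

Passing the steps in as callables keeps the flow easy to test with stubs before any model or database is wired in.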

This is all logical and common sense. Someone has a question. You get the information for them and you figure out the answer. Finally, as a rule of thumb, you should know your audience and communicate to them in a way so that your words will land effectively.

But style transformation is an interesting thing, with various real-world uses. I write like an engineer, and sometimes I need my words to sound a bit more like the Marketing Department, or a bit more businessy.

It all boils down to knowing your audience. Know your users.

Currently, I am my user, so I want this to be useful to me. I didn’t love the previous outputs, so I gave this some style.

Before and After Comparison

I’ll show a quick before and after, and then do the full show-and-tell.

Previously, this was the output:

what papers are about jupiter?

There's a bunch of interesting research about Jupiter and how it interacts with both Earth and other celestial bodies. Much of it focuses on whether Jupiter acts as a protective shield against impacts from asteroids and comets or if it actually increases those risks. Some studies even look at how Jupiter’s gravity affects Earth’s climate over long periods and the origins of certain types of exoplanets related to Jupiter-like characteristics.

**References:**

- Horner & Jones (2008) - Jupiter - friend or foe? I: the asteroids [link](http://arxiv.org/pdf/0806.2795v3)

... saving space ...

- Martin et al. (2011) - A binary merger origin for inflated hot Jupiter planets [link](http://arxiv.org/pdf/1102.3336v3)

Tip: Searching by year or year ranges may yield more useful results.

And now it looks like this:

what papers are about jupiter?

Here's a concise summary of the papers about Jupiter that I found for you.

**Papers:**

1. **Jupiter - friend or foe? III: the Oort cloud comets**: This paper looks at how the presence of a giant planet affects the impact rate from Oort cloud comets. It finds that having a giant planet like Jupiter reduces the likelihood of impacts on Earth, suggesting that the rate increases without its presence.

Horner, J., Jones, B. W., & Chambers, J. (2009). Jupiter - friend or foe? III: the Oort cloud comets. http://arxiv.org/pdf/0911.4381v1

... three more; saving space ...

5. **A binary merger origin for inflated hot Jupiter planets**: This one suggests that hot Jupiters might form from the mergers of low-mass stars. It looks at how the properties of these planets connect to their formation processes and what that means for their occurrence around different stars.

Martin, E. L., Spruit, H. C., & Tata, R. (2011). A binary merger origin for inflated hot Jupiter planets. http://arxiv.org/pdf/1102.3336v3

This list covers various facets of Jupiter's impact on our solar system, from its role in shaping Earth to the mysteries of gas giants.

A few improvements:

The GraphRAG now writes an individual summary for each paper in the style I have given it. Each paper already had a summary; the summary has been translated into a style that works well for me. I could have just as easily had it write in reverse, or Pig Latin, or be extremely sarcastic. But I wanted this to be useful to me, so I gave it a particular writing style.

I asked for the references to appear in APA formatting. I said exactly that so that it would stop adding extra text like [link], which is just junk. You’ll see it in the first example. I haven’t confirmed that it is valid APA formatting, but it looks really clean now, so I like it.

So, I get summaries about the individual papers, instead of one summary that doesn’t say anything of value, and I can see cleaner context. Finally, this is in a voice that works for me. Style translation mission accomplished.
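The style step boils down to handing the model the answer plus a short list of explicit style rules. Here is a minimal sketch of how such a prompt could be assembled. The rule wording, `STYLE_RULES`, and `build_style_prompt` are all assumptions for illustration, not the prompt actually used for the outputs in this article.

```python
# Hypothetical style rules, loosely based on the improvements described above.
STYLE_RULES = [
    "Write a short summary for each paper individually.",
    "Use a plain, conversational voice.",
    "List each reference in APA format.",
    "Do not add extra text such as [link].",
]


def build_style_prompt(answer: str, rules=STYLE_RULES) -> str:
    """Build the text sent to the LLM for the style transformation step."""
    bullet_rules = "\n".join(f"- {rule}" for rule in rules)
    return (
        "Rewrite the answer below so that it follows these style rules.\n"
        "Keep every fact, title, author, year, and URL unchanged.\n\n"
        f"Style rules:\n{bullet_rules}\n\n"
        f"Answer:\n{answer}\n"
    )


if __name__ == "__main__":
    print(build_style_prompt("Horner & Jones (2008) - Jupiter - friend or foe?"))
```

Keeping the rules in a list makes it easy to swap voices (marketing, business, sarcastic) without touching the rest of the pipeline.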

Show-and-Tell

The database is wonderful, and I am enjoying exploring. This is such a useful and powerful research tool. Artificial Intelligence relates to Artificial Life, so there is so much Machine Learning history in this dataset. It is fascinating. But here’s something else I thought was neat!

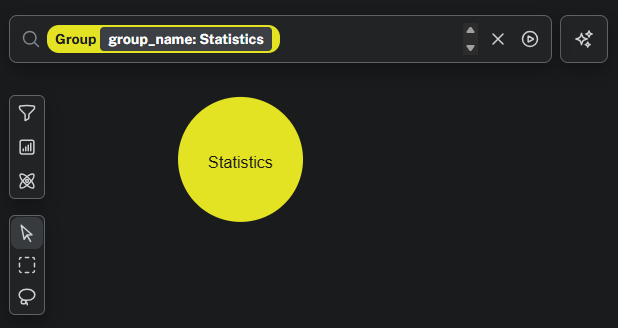

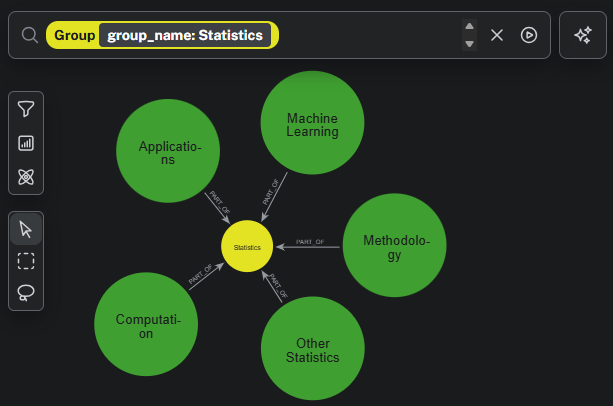

If I search the database for Statistics, there’s one node.

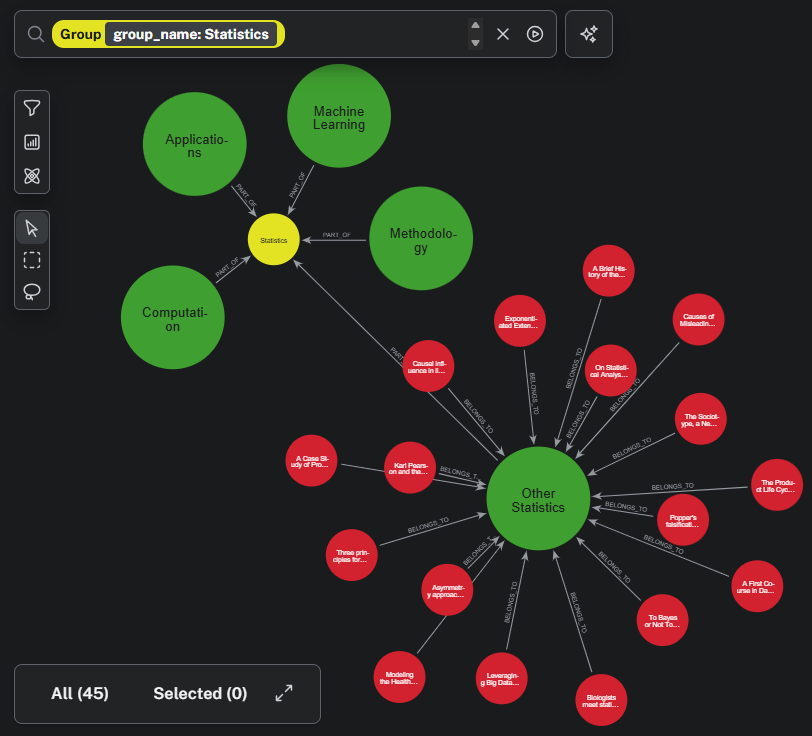

If I expand that, there are five categories, and one of them is Machine Learning. All of you should be curious about what that node points towards. Answer: It points to HUNDREDS of research papers about Machine Learning.

But that oddball, right there… “Other Statistics”

Neat… I wanna explore that.

Really, really cool. But today, we’re talking about GraphRAG. There’s a lot of great data in the database. Let’s use AI to learn more. I’ll just show outputs, from now on.

Teleportation

what papers are about teleportation from the years 2010 to 2020?

Here are some interesting papers about teleportation from 2010 to 2020:

**Papers:**

1. **Bringing quantum mechanics to life: from Schrödinger's cat to Schrödinger's microbe**: This paper dives into the shift from quantum to classical mechanics. It reviews ideas related to quantum superpositions and entanglement, and it discusses proposals for teleporting microorganisms. It's a blend of quantum physics and biology that's quite fascinating.

Zhang-qi Yin, T., & Li, T. (2016). Bringing quantum mechanics to life: from Schrödinger's cat to Schrödinger's microbe. http://arxiv.org/pdf/1608.05322v2

2. **A Simulation for Neurophotonic Quantum Computation in Visual Pathways**: This paper explores the Copenhagen Interpretation of quantum theory and presents a simulation using teleportation circuits in photons within neurons. It discusses potential macroscopic quantum states in the brain and connects to how visual information is processed.

Valian, H., Bassereh, H., Barkhordari, A., & Salari, V. (2014). A Simulation for Neurophotonic Quantum Computation in Visual Pathways. http://arxiv.org/pdf/1412.7551v2

3. **Toward Formalizing Teleportation of Pedagogical Artificial Agents**: Here, the authors look at creating artificial agents that can "teleport" between different forms during student interactions. They take initial steps to formalize this idea to ensure a consistent experience for students across different embodiments.

Angel, J., Govindarajulu, N. S., & Bringsjord, S. (2018). Toward Formalizing Teleportation of Pedagogical Artificial Agents. http://arxiv.org/pdf/1804.03342v1

4. **An artificial atom locked to natural atoms**: This paper reports on an artificial atom emitting photons that are locked to the energy of a natural atom. It's crucial for quantum information applications, including teleportation, as it spotlights the challenges of integrating artificial and natural systems.

Akopian, N., Trotta, R., Zallo, E., Kumar, S., Atkinson, P., Rastelli, A., Schmidt, O. G., & Zwiller, V. (2013). An artificial atom locked to natural atoms. http://arxiv.org/pdf/1302.2005v1

These papers highlight different aspects of teleportation, covering quantum physics, neuroscience, and AI.

Machine Learning

tell me about three papers that are about Machine Learning from 2020 to 2023?

Here are three interesting papers about Machine Learning published between 2020 and 2023:

**Papers:**

1. **Machine Learning for Software Engineering: A Systematic Mapping**: This paper looks at how machine learning is being adopted throughout the software engineering life cycle. By analyzing 227 articles, the authors create a taxonomy that sorts various machine learning techniques based on where they can be applied in software engineering, aiming to help academics and practitioners see the benefits of integration.

Shafiq, S., Mashkoor, A., Mayr-Dorn, C., & Egyed, A. (2020). Machine Learning for Software Engineering: A Systematic Mapping. http://arxiv.org/pdf/2005.13299v1

2. **Machine Learning and Deep Learning**: This article provides a solid overview of both machine learning and deep learning. It discusses how these technologies automate the process of building analytical models and outlines benefits over traditional methods. The paper also highlights challenges around implementing intelligent systems, particularly in electronic markets and how humans interact with machines.

Janiesch, C., Zschech, P., & Heinrich, K. (2021). Machine Learning and Deep Learning. http://arxiv.org/pdf/2104.05314v2

3. **Modelling Concurrency Bugs Using Machine Learning**: This research dives into how machine learning can be used to find concurrency bugs in parallel programming. It addresses the difficulties in developing automatic detection tools and shows how machine learning can improve their accuracy. The study employs a synthetic dataset to test different models and approaches for detecting these types of bugs.

Begu, T. R. (2023). Modelling Concurrency Bugs Using Machine Learning. http://arxiv.org/pdf/2305.05531v1

These papers highlight different dimensions of machine learning, from software engineering practices to deep learning fundamentals and even specific challenges in programming.

Random Papers about a Topic

tell me about a random paper that is about Machine Learning from 2020 to 2023?

Here's a breakdown of that paper you mentioned.

**Papers:**

1. **Evaluating feasibility of batteries for second-life applications using machine learning**: This paper by Aki Takahashi, Anirudh Allam, and Simona Onori presents a machine learning approach to assess whether retired electric vehicle batteries can be reused or should be recycled. The authors developed a method that uses statistical feature generation from battery measurements and Gaussian Process Regression with bagging for evaluation. Their validation on a dataset of over 200 cells showed impressive accuracy, with performance errors under 1.5% in challenging cases.

Takahashi, A., Allam, A., & Onori, S. (2022). Evaluating feasibility of batteries for second-life applications using machine learning. http://arxiv.org/pdf/2203.04249v2

This one actually really impressed me. It correctly used the rand() function to pull one random paper about Machine Learning that was written between 2020 and 2023 and tell me about it.
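The generated Cypher is not shown here, but a query of this kind could look roughly like the sketch below. The schema is an assumption: the `Paper` and `Category` labels, the `IN_CATEGORY` relationship, and the `title`, `url`, `year`, and `name` properties are hypothetical, and the real database may model years differently. The only parts taken from the text are the use of `rand()` and the limit of one paper.

```python
def random_paper_query(topic: str, start_year: int, end_year: int):
    """Return a parameterized Cypher query that picks one random matching paper."""
    query = (
        "MATCH (p:Paper)-[:IN_CATEGORY]->(c:Category) "  # hypothetical schema
        "WHERE toLower(c.name) CONTAINS toLower($topic) "
        "AND p.year >= $start_year AND p.year <= $end_year "
        "RETURN p.title AS title, p.url AS url "
        "ORDER BY rand() LIMIT 1"  # shuffle the matches, keep one
    )
    params = {"topic": topic, "start_year": start_year, "end_year": end_year}
    return query, params


if __name__ == "__main__":
    query, params = random_paper_query("Machine Learning", 2020, 2023)
    print(query)
    print(params)
```

Passing the topic and years as parameters, rather than pasting them into the query string, avoids quoting problems when the values come from user input.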

Causal Inference

tell me about seven papers that are about Causal Inference

Here's a solid list of papers on causal inference that cover various challenges and methods in the field.

**Papers:**

1. **Causal Inference with Unmeasured Confounding from Nonignorable Missing Outcomes**: This one tackles the tricky issue of getting causal effects from studies where some confounders aren’t measured and there are missing outcomes. It introduces an auxiliary variable for better identification and shows how to deal with unmeasured confounding using causal diagrams.

Zheng, R. (2023). Causal Inference with Unmeasured Confounding from Nonignorable Missing Outcomes. http://arxiv.org/pdf/2305.07226v1

2. **Causal Independence for Knowledge Acquisition and Inference**: Heckerman focuses on how to use a temporal belief-network to make knowledge acquisition easier in probabilistic models. Though it’s less general than other methods, it streamlines inference without the need for variables that can’t be measured.

Heckerman, D. (2013). Causal Independence for Knowledge Acquisition and Inference. http://arxiv.org/pdf/1303.1468v2

3. **On Measurement Bias in Causal Inference**: Pearl discusses the impact of measurement errors on causal inference and offers algebraic and graphical methods to handle bias caused by these errors, especially for partially observable confounders in different types of models.

Pearl, J. (2012). On Measurement Bias in Causal Inference. http://arxiv.org/pdf/1203.3504v1

4. **Degree of Interference: A General Framework For Causal Inference Under Interference**: Ohnishi and colleagues critique the no-interference assumption often made in causal inference. They propose a framework called “degree of interference” that allows for more nuanced inferences in complex experimental designs.

Ohnishi, Y., Karmakar, B., & Sabbaghi, A. (2022). Degree of Interference: A General Framework For Causal Inference Under Interference. http://arxiv.org/pdf/2210.17516v2

5. **Causal Inference with Deep Causal Graphs**: This paper introduces Deep Causal Graphs, making them useful for parametric causal modeling. The authors highlight how neural networks can effectively model causal distributions, which is beneficial for counterfactual estimations.

Parafita, Á., & Vitrià, J. (2020). Causal Inference with Deep Causal Graphs. http://arxiv.org/pdf/2006.08380v1

6. **Conditioning Methods for Exact and Approximate Inference in Causal Networks**: Darwiche introduces two new algorithms that improve causal network inference: dynamic conditioning for efficiency and B-conditioning for balancing accuracy with computation time. Experimental results showed they work well.

Darwiche, A. (2013). Conditioning Methods for Exact and Approximate Inference in Causal Networks. http://arxiv.org/pdf/1302.4939v1

7. **Inference with Causal Independence in the CPSC Network**: Zhang applies a causal independence inference method to the CPSC network, successfully navigating various observational queries. This paper demonstrates the robustness of the algorithm in practical applications.

Zhang, N. L. (2013). Inference with Causal Independence in the CPSC Network. http://arxiv.org/pdf/1302.4993v1

These papers provide a range of techniques and methodologies that can help in understanding the nuances of causal inference.

Categories Related to a Topic

what categories are related to papers about jupiter?

Papers about Jupiter span a few key categories in astrophysics, mainly tackling topics in planetary science and related fields. These categories help researchers dive into different aspects of Jupiter and its role in the solar system.

**Categories:**

1. **Earth and Planetary Astrophysics (astro-ph.EP)**: This covers everything related to planets, from their formation and physics to planetary atmospheres and astrobiology. It’s the main category for papers focused on Jupiter, looking at its moons, rings, and its influence on the solar system.

2. **Solar and Stellar Astrophysics (astro-ph.SR)**: While this primarily focuses on stars, some research intertwines these topics with planetary systems, providing insights into how stars and their planets interact.

3. **Instrumentation and Methods for Astrophysics (astro-ph.IM)**: This involves the tech used to study celestial bodies, including telescopes and observational methods. Papers in this category often discuss new tools for investigating Jupiter or improve analysis techniques for collected data.

4. **Cosmology and Nongalactic Astrophysics (astro-ph.CO)**: Though it’s more general and not focused specifically on planets, this category offers theoretical frameworks that can enhance our understanding of Jupiter's context in the universe.

5. **Symplectic Geometry (math.SG)**: This category relates to the mathematical theories behind dynamical systems, which can be relevant when studying the gravitational interactions within Jupiter's system.

Overall, Earth and Planetary Astrophysics is the core focus for Jupiter-related research, but other categories can shed light on different aspects, depending on the angle of the study.

Category Subtraction

what categories are there other than optics??

There’s a wide range of research categories beyond optics that cover various disciplines. These categories include everything from mathematics to environmental sciences and focus on specific topics within those fields. This diversity allows researchers to explore numerous interests and areas of expertise.

**Categories:**

1. **Algebraic Geometry** (math.AG): Focuses on algebraic varieties and their properties, including concepts like schemes and moduli spaces, linking algebra with geometry.

2. **Artificial Intelligence** (cs.AI): Encompasses various AI fields, excluding areas like machine learning or robotics, and covers theorem proving and knowledge representation.

3. **Adaptation and Self-Organizing Systems** (nlin.AO): Studies systems that adapt and self-organize, including stochastic processes and statistical physics themes.

4. **Cell Behavior** (q-bio.CB): Researches processes like cell signaling, morphogenesis, and interactions between different cell types or organisms.

5. **Combinatorics** (math.CO): Deals with discrete structures, graph theory, and related problems, often in optimization and game theory contexts.

6. **Performance** (cs.PF): Covers techniques for measuring and evaluating performance in computing systems, including simulation methods.

7. **Dimensional Systems and Neural Networks** (cond-mat.dis-nn): Explores systems with complex properties like disorder and investigates aspects of neural networks.

8. **Medical Physics** (physics.med-ph): Combines physics and medicine, focusing on radiation therapy and imaging technologies.

9. **Data Structures and Algorithms** (cs.DS): Focuses on the design and analysis of data structures, which are fundamental in computer science.

10. **Quantum Gases** (cond-mat.quant-gas): Studies properties of ultracold gases, such as Bose-Einstein condensates, using quantum mechanics principles.

11. **Soft Condensed Matter** (cond-mat.soft): Looks into materials like polymers and colloids, which exhibit unique properties in soft matter physics.

12. **Neural and Evolutionary Computing** (cs.NE): Investigates neural networks and evolutionary algorithms, emphasizing adaptive behaviors in computing.

13. **Statistics Theory** (math.ST): Covers fundamental areas in statistics, including various methods for statistical inference and analysis.

14. **Machine Learning** (cs.LG): Examines all aspects of learning algorithms, from supervised to unsupervised techniques.

15. **Quantum Physics** (quant-ph): Explores diverse topics associated with quantum mechanics and its applications.

16. **Graph Theory** (cs.GR): Focuses on graph structures and their properties, playing a crucial role in computer science and mathematics.

17. **Mathematical Finance** (q-fin.MF): Discusses mathematical tools and models applied in finance, especially stochastic processes.

18. **Information Theory** (cs.IT): Deals with the quantification of information and coding principles, pivotal in data transmission.

19. **Dynamical Systems** (math.DS): Investigates differential equations and mechanics, focusing on system behavior over time.

20. **Cryptography and Security** (cs.CR): Encompasses methods for secure communication and data protection in information technology.

21. **Complex Variables** (math.CV): Studies functions of complex variables and their applications in mathematical analysis.

22. **General Physics** (physics.gen-ph): Covers broad principles and theories in physics, sometimes bridging multiple subfields.

23. **Environmental Sciences** (stat.AP): Looks at environmental topics often intersecting with applications of statistical analysis.

24. **Computers and Society** (cs.CY): Explores the implications of computing on society, such as ethical considerations and social impact.

25. **Solid State Physics** (cond-mat): Studies various properties and phenomena in solid materials, an essential part of condensed matter physics.

These categories offer diverse opportunities for researchers to engage with different scientific areas.

Very nice! It did the subtraction and aimed for completeness in answering. I can’t stand it when AI confidently gives a tiny part of the answer as if it were the whole thing. I explicitly instruct mine to aim for completeness.
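On the database side, the "subtraction" can be as simple as excluding one category and returning everything else, with no LIMIT so the answer stays complete. A minimal sketch, assuming a hypothetical `Category` label with `name` and `code` properties (the real schema and the query the model actually generated may differ):

```python
def categories_except_query(excluded: str):
    """Return a parameterized Cypher query for every category except one."""
    query = (
        "MATCH (c:Category) "  # hypothetical label and properties
        "WHERE toLower(c.name) <> toLower($excluded) "
        "RETURN c.name AS name, c.code AS code "
        "ORDER BY name"  # no LIMIT: return the full list
    )
    return query, {"excluded": excluded}


if __name__ == "__main__":
    query, params = categories_except_query("Optics")
    print(query)
    print(params)
```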

The Moon

what papers are about Earth's moon?

Here's a collection of papers discussing Earth's moon, covering everything from its formation to its influence on life.

**Papers:**

1. **Distribution of dust ejected from the lunar surface into the Earth-Moon system**: This paper dives into how dust particles escape from the Moon and their distribution within the Earth-Moon system. It details computer simulations of dust trajectories and finds that about 2% of ejected particles can escape lunar gravity, forming an asymmetric torus in space.

Yang, K., Schmidt, J., Feng, W., & Liu, X. (2022). Distribution of dust ejected from the lunar surface into the Earth-Moon system. http://arxiv.org/pdf/2204.01040v1

2. **How common are Earth-Moon planetary systems?**: The authors look into how many terrestrial planets might have massive moons, arguing that at least 1 in 12 has one. They base this on simulations of how moons form through giant impacts, which has big implications for understanding planetary systems.

Elser, S., Moore, B., Stadel, J., & Morishima, R. (2011). How common are Earth-Moon planetary systems? http://arxiv.org/pdf/1105.4616v1

3. **LOUPE: Observing Earth from the Moon to prepare for detecting life on Earth-like exoplanets**: This paper discusses LOUPE, a spectro-polarimeter designed to observe Earth from the Moon. It aims to help gather data on Earth's sunlight reflections, which can be useful for detecting potential signs of life on exoplanets.

Klindžić, D., Stam, D. M., Snik, F., Hoeijmakers, H. J., Willebrands, M., Karalidi, T., Pallichadath, V., Dijk, C. N. van, & Esposito, M. (2020). LOUPE: Observing Earth from the Moon to prepare for detecting life on Earth-like exoplanets. http://arxiv.org/pdf/2007.16078v2

4. **A New Disintegrative Capture Theory for the Origin of the Moon**: This paper presents a different take on the Moon's origin, suggesting it was captured by Earth rather than formed from a collision. It also looks at the implications of this theory, including a possible younger age for the Moon and its minerals.

Noerdlinger, P. D. (2012). A New Disintegrative Capture Theory for the Origin of the Moon. http://arxiv.org/pdf/1204.0980v2

5. **The moon and the origin of life**: This review discusses how Earth's large moon may relate to the emergence of life. It explores ideas about how the Moon stabilizes Earth's tilt and affects ocean tides, which could create conditions favorable for life to develop.

Benn, C. R. (2001). The moon and the origin of life. http://arxiv.org/pdf/astro-ph/0112399v1

These papers provide a range of insights into the Moon's dynamics, formation theories, and its impact on life on Earth.

Very nice! I am really impressed.

Reaction, and What’s Next?

I feel really good about this. This is doing what I want. The Artificial Life dataset has become “alive” to me in that I can interact with it in ways I never could before. There’s a Graph Database that I can explore visually, and there’s a minimal GraphRAG I can type to. I like the answers! There’s nothing blocking me from using this as it is right now!

But there is room for improvement:

I could literally give this a voice and have it speak answers to me. This is very easy to do.

I want to be able to use much more complex prompts that have multiple steps. This is very linear thinking, so far. A→B. I want network thinking. I want this to take a prompt, really understand it, convert it into a DAG of steps, and then do them. That is what is in my mind.

I want to collapse the bottlenecks and make it faster.

I want to do testing and validation to know how well it is actually doing, not how well it seems to be doing.
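To make the "DAG of steps" idea concrete, here is a minimal sketch using `graphlib.TopologicalSorter` from the Python standard library (Python 3.9+). It is only an illustration of running steps in dependency order. The step names and the `run_dag` helper are hypothetical, and the hard part, having an LLM turn a prompt into the DAG, is not shown.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Set


def run_dag(
    steps: Dict[str, Callable[[dict], object]],
    depends_on: Dict[str, Set[str]],
) -> dict:
    """Run each step after its prerequisites; each step sees earlier results."""
    results: dict = {}
    # static_order() yields every node with its prerequisites first.
    for name in TopologicalSorter(depends_on).static_order():
        results[name] = steps[name](results)
    return results


if __name__ == "__main__":
    # Hypothetical steps: two independent lookups feeding one combined answer.
    steps = {
        "find_papers": lambda r: ["paper A", "paper B"],
        "find_categories": lambda r: ["astro-ph.EP"],
        "combine": lambda r: {
            "papers": r["find_papers"],
            "categories": r["find_categories"],
        },
    }
    depends_on = {
        "find_papers": set(),
        "find_categories": set(),
        "combine": {"find_papers", "find_categories"},
    }
    print(run_dag(steps, depends_on)["combine"])
```

Independent steps such as the two lookups have no edge between them, so they could later be run in parallel, which also speaks to the bottleneck point above.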

Here’s what I don’t want:

I don’t want to spend time improving keyword search. It works well enough.

I don’t want to spend time creating a bunch more node and edge types. It works well enough, and simple is faster than complicated. I only add more when needed. “Everything should be as simple as possible, but not simpler.” (Einstein)

I don’t want to spend any time on things that do not add noticeable value or usefulness. If it doesn’t lead to better or better+faster answers, it’s not urgent. If it only leads to faster answers, it’s not urgent, because I care more about quality than I care about speed. I want to aim for precision AI, not more frustration.

So with that, I’m calling this good to go for Minimal GraphRAG for Artificial Life. It’s not perfect, and I’ll tinker with it, but it’s ready for me to write about how I did this.

I’ll be releasing some articles for paying subscribers that include code. I’ll be working on those, next. Paying subscribers can also have access to this database, and can use it for their own GraphRAG learning and experimentation.

We’re Not Out of Space, Yet!

I feel like at this point, Substack is usually complaining that I am almost out of space for an article, but it is not, today, so I’ll share more database screenshots!

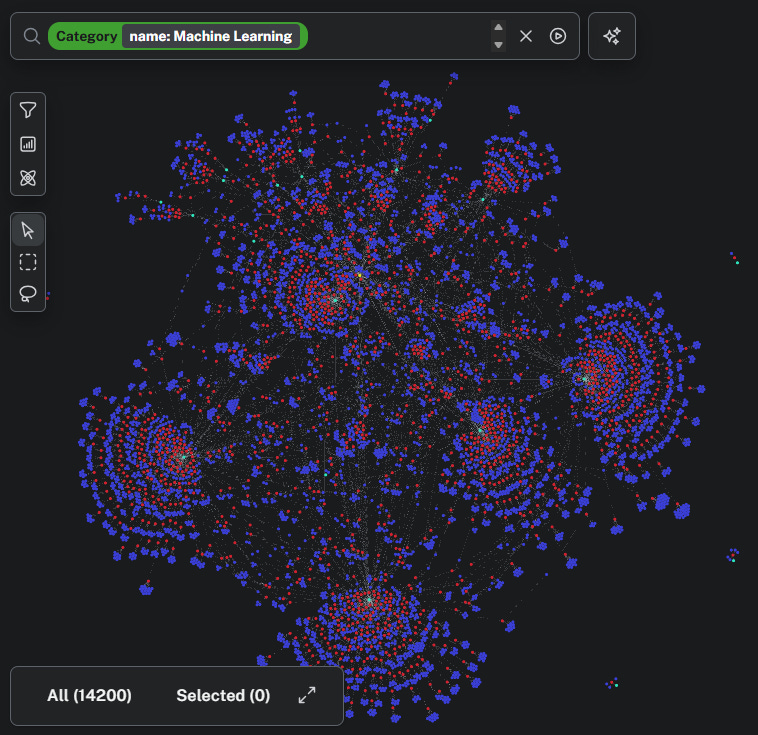

What do we have about Machine Learning?

Neat! The green dots are years, so it is clear that some years have a lot more activity than other years.

Ok, we are out of space! Thank you for reading today’s update!

Please Support this Work!

I have written over 60 articles for this series. Each one takes about four hours of research, and several pages of writing and editing. Here are some ways you can support the blog!

Please subscribe if you have not. This motivates me like nothing else!

LET’S DO BUSINESS. Reach out to me if you need data or AI help! Happy to help! You can read about the partners we are currently looking for here.

BIGGEST HELP to the BLOG: Please consider upgrading if you are a subscriber. Thank you to all current paying subscribers for making this research and development possible! You can have Database access and minimal GraphRAG code if you want it.

Please buy my book to understand how I think about Natural Language Processing and Network Science combined.

Please reach out to me if interested in training related to Data Science, Data Engineering, Network Science, Knowledge Graphs, Artificial Intelligence, or anything else I write about. Feel free to message me on Substack or on LinkedIn!

Feel free to hang out in the comments and have a good time!

We have come so far since the very first day of the very first #100daysofnetworks. I love writing for this series. Thank you for being a part of it!

Thank you so much for this work! I didn’t know this was possible before talking to you…it makes so many things possible. I’m going to have to read this again (maybe a few times) to fully grasp it…I’ll also send it to Gemini who will understand completely.

I wanted to give you some feedback, as I know what it's like to throw words into the void. You probably have insight into how many folks are consuming your content, but specifically, I wanted to personally thank you for your efforts. Not every article is for me because it's above my head or there isn't any applicability to me in my life or my job, but I am learning new stuff. Please keep up the great work on this series. If you have the time and the inclination, could you help point me in the direction of more fundamental information on RAG (since you seem to be an expert)?